TL;DR:

- AI web applications differ from traditional apps by responding to context and learning over time.

- Robust architecture requires an orchestration layer to manage AI unpredictability and ensure governance.

- Success depends on organisational maturity, governance, and continuous evaluation, not on technical model performance alone.

Most business leaders assume that deploying AI in web applications is fundamentally a technology problem, one solved by selecting the right model, hiring skilled engineers, and writing clean code. That assumption is costly. Recent architectural research confirms that production AI web apps require a disciplined orchestration layer and strict contracts between system components, meaning the path to real business value runs through governance, evaluation, and organisational readiness just as much as it runs through code.

Table of Contents

- What sets AI web applications apart from traditional web apps

- Core architectural principles for robust AI web applications

- Evaluating AI web applications: Beyond code correctness

- Governance and IT maturity: The real drivers of enterprise AI success

- How enterprises apply AI web applications: Real-world scenarios

- Why conventional approaches to AI web apps miss the mark

- Next steps: Partnering for successful AI web application development

- Frequently asked questions

Key Takeaways

| Point | Details |
|---|---|
| Architecture matters most | A production-ready AI web app needs a dedicated orchestration layer and secure contracts instead of direct model calls. |
| Measure real user impact | Effective evaluation goes beyond code to test usability, task completion, and real-world functional success. |
| Governance drives success | Enterprise AI adoption depends more on IT maturity and governance than on model performance metrics alone. |
| Start with business goals | Successful projects begin by aligning AI capability with clear organisational outcomes and readiness. |

What sets AI web applications apart from traditional web apps

To see why AI web applications are not just an upgrade but a fundamental shift, let’s break down their unique features compared to traditional web solutions.

Traditional web applications follow predictable, rule-based logic. A user clicks a button, data is retrieved from a database, a response is rendered. The process is deterministic and auditable. AI web applications introduce a fundamentally different dynamic: they respond to context, learn from interaction patterns, generate outputs that vary based on probabilistic reasoning, and adapt over time without manual reprogramming.

This distinction has major strategic implications for how you architect, evaluate, and govern these systems. Understanding the role of AI in business growth begins with recognising that AI-enabled web apps are not simply smarter versions of existing tools. They are a different category of software entirely.

Key components that distinguish AI web applications from their traditional counterparts include:

- Large Language Models (LLMs): These are the reasoning engines behind AI web apps. They process natural language, generate content, interpret instructions, and facilitate intelligent dialogue. However, they introduce non-determinism, meaning identical inputs can yield different outputs.

- Orchestration layers: This is the critical middleware that coordinates communication between your business logic, your data sources, and the AI model. It enforces contracts, manages retries, and shields core APIs from unpredictable AI behaviour.

- Dynamic interaction models: Unlike static forms or rule-based chatbots, AI web applications can engage users in multi-turn conversations, adapt recommendations in real time, and escalate to human agents when needed.

- Context management systems: These maintain state across user sessions, enabling AI to remember preferences, track task progress, and deliver coherent experiences over time.

- Integration with enterprise data: AI web apps derive their business value from access to proprietary data, whether customer records, product inventories, or operational logs.

Pro Tip: Never allow your front-end or core business APIs to call an LLM directly. The orchestration layer is not optional infrastructure. It is the mechanism that keeps your application reliable, auditable, and aligned with business rules under all conditions.

The strategic value of this architecture is significant. When designed correctly, AI web applications can automate high-volume decision-making, personalise customer journeys at scale, surface operational insights in real time, and reduce the manual burden on knowledge workers across departments.

Core architectural principles for robust AI web applications

Defining what makes AI web applications unique sets the stage for understanding the architecture required to make them truly reliable and scalable for business.

The single most important architectural decision you will make is the design of your orchestration layer. As production-grade AI architecture principles confirm, LLMs must never be called directly inside core business APIs. The reason is straightforward: LLMs are stateless, probabilistic services. They have latency variability, rate limits, versioning changes, and potential for unexpected outputs. Calling them directly from business-critical APIs introduces fragility, traceability gaps, and compliance risk.

The orchestration layer solves this by acting as a contract-enforcing middleware. It validates inputs before they reach the model, enforces output schemas, handles failures gracefully, and logs every interaction for audit purposes.
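
The four responsibilities above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not a production design: the `call_model` callable, the `answer`/`confidence` output contract, the input length limit, and the fallback message are all hypothetical names and values chosen for the example.

```python
import json
import logging
from dataclasses import dataclass
from typing import Callable

logger = logging.getLogger("orchestrator")

# Hypothetical contract: the model must return JSON with exactly these keys.
REQUIRED_KEYS = {"answer", "confidence"}
MAX_INPUT_CHARS = 2000  # assumed limit, for illustration only
FALLBACK_ANSWER = "We could not process this request automatically."


@dataclass(frozen=True)
class OrchestratedResult:
    answer: str
    confidence: float
    used_fallback: bool
    attempts: int


def validate_input(user_text: str) -> str:
    """Reject empty or oversized input before it reaches the model."""
    cleaned = user_text.strip()
    if not cleaned:
        raise ValueError("input is empty")
    if len(cleaned) > MAX_INPUT_CHARS:
        raise ValueError("input exceeds the allowed length")
    return cleaned


def parse_output(raw: str) -> dict:
    """Enforce the output schema; raise ValueError on any violation."""
    data = json.loads(raw)  # JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict) or set(data) != REQUIRED_KEYS:
        raise ValueError("output does not match the contract")
    if not isinstance(data["answer"], str):
        raise ValueError("answer must be a string")
    confidence = data["confidence"]
    if isinstance(confidence, bool) or not isinstance(confidence, (int, float)):
        raise ValueError("confidence must be a number")
    if not 0.0 <= confidence <= 1.0:
        raise ValueError("confidence must be between 0 and 1")
    return data


def orchestrate(
    user_text: str,
    call_model: Callable[[str], str],
    max_attempts: int = 3,
) -> OrchestratedResult:
    """Validate input, call the model with retries, validate output, log each attempt."""
    prompt = validate_input(user_text)
    for attempt in range(1, max_attempts + 1):
        try:
            data = parse_output(call_model(prompt))
        except Exception as exc:  # broad on purpose: provider errors and contract violations
            logger.warning("attempt %d failed: %s", attempt, exc)
            continue
        logger.info("attempt %d succeeded", attempt)
        return OrchestratedResult(data["answer"], float(data["confidence"]), False, attempt)
    # Every attempt failed: return a safe, clearly flagged fallback.
    return OrchestratedResult(FALLBACK_ANSWER, 0.0, True, max_attempts)
```

Because the model is passed in as a plain callable, the business API depends only on `OrchestratedResult` and never on a provider SDK, which is the separation the orchestration layer is meant to enforce.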

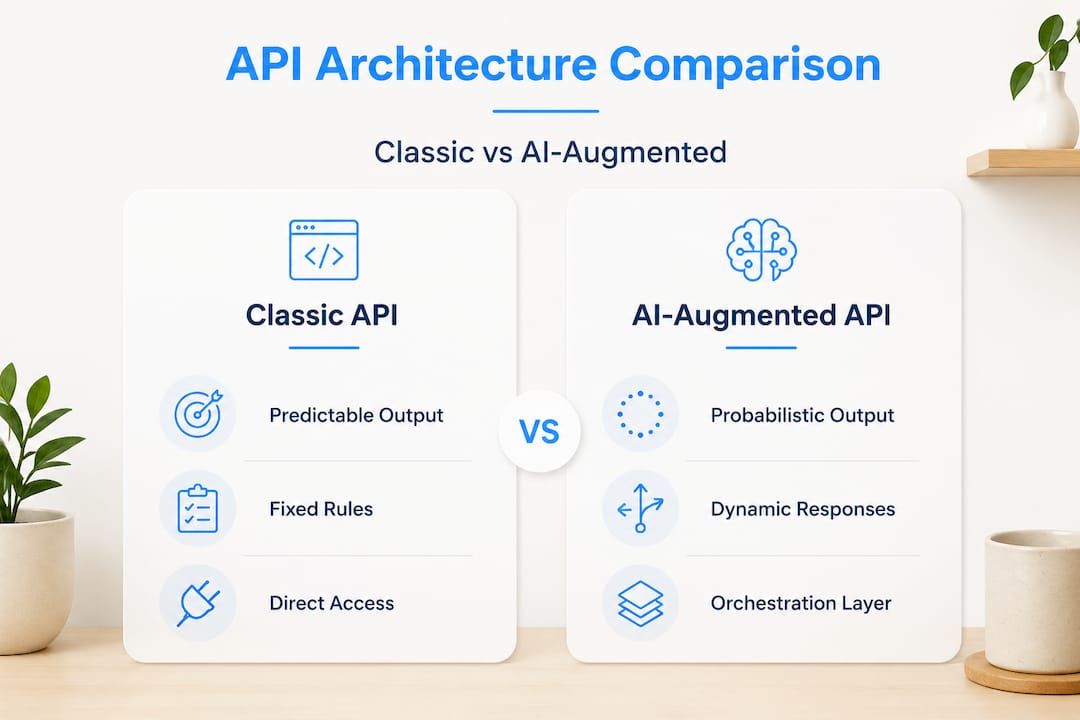

Here is a direct comparison of classic API design versus AI-augmented API design to illustrate the critical differences:

| Dimension | Classic API | AI-augmented API |
|---|---|---|
| Output predictability | Deterministic | Probabilistic, requires validation |
| Failure handling | Standard error codes | Requires fallback chains and retries |
| Latency profile | Consistent, milliseconds | Variable, can exceed seconds |
| Audit trail | Native in logs | Requires explicit orchestration |
| Scalability | Horizontal scaling | Dependent on model provider limits |
| Governance | Straightforward | Requires prompt versioning and model pinning |

Building smarter digital solutions at an enterprise level requires you to account for every row in that table. The orchestration layer must also implement observability, meaning you should be able to trace exactly which prompt version produced which output, for which user, at which timestamp. Without this, debugging AI behaviour in production becomes a significant operational challenge.

Pro Tip: Treat your orchestration layer like a regulatory compliance boundary. Every AI output that influences a business decision should be logged, versioned, and retrievable. This protects you legally, operationally, and reputationally.

Two further architectural considerations deserve attention. First, prompt versioning: your prompts are effectively part of your application’s codebase and must be version-controlled just like source code. Second, model pinning: where possible, pin your application to a specific model version to prevent unexpected behaviour changes when providers update their models.
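
One way to make prompt versioning, model pinning, and traceability concrete is to attach both identifiers to every logged output. The sketch below is illustrative only: the `PromptVersion` and `TraceRecord` types, the field names, and the pinned model identifier are hypothetical and do not correspond to any real provider or library.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from datetime import datetime, timezone
from typing import Optional

# Hypothetical pinned model identifier; a real one comes from your provider.
PINNED_MODEL = "example-model-2024-01-01"


@dataclass(frozen=True)
class PromptVersion:
    name: str
    version: str   # bumped through code review, like any source change
    template: str

    @property
    def fingerprint(self) -> str:
        """Short content hash, so an unreviewed template edit is detectable."""
        return hashlib.sha256(self.template.encode("utf-8")).hexdigest()[:12]


@dataclass(frozen=True)
class TraceRecord:
    user_id: str
    prompt_name: str
    prompt_version: str
    prompt_fingerprint: str
    model: str
    output: str
    timestamp: str


def build_trace(
    user_id: str,
    prompt: PromptVersion,
    output: str,
    now: Optional[datetime] = None,
) -> TraceRecord:
    """Record which prompt version produced which output, for whom, and when."""
    moment = now if now is not None else datetime.now(timezone.utc)
    return TraceRecord(
        user_id=user_id,
        prompt_name=prompt.name,
        prompt_version=prompt.version,
        prompt_fingerprint=prompt.fingerprint,
        model=PINNED_MODEL,
        output=output,
        timestamp=moment.isoformat(),
    )


def to_log_line(record: TraceRecord) -> str:
    """Serialise the record as one JSON line for an append-only audit log."""
    return json.dumps(asdict(record), sort_keys=True)
```

Storing the fingerprint next to the version number is a design choice for the sketch: it lets an auditor confirm that the template text in use matches the version that was reviewed.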

Evaluating AI web applications: Beyond code correctness

With robust architecture in place, how should organisations judge if their AI web apps actually perform well for users and meet business objectives?

This is where many enterprises make a critical error. They measure success through technical metrics alone: uptime, response time, test coverage, and error rates. These metrics matter, but they tell only part of the story. Emerging evaluation frameworks for AI-generated web applications go beyond code correctness to measure interactive usability and functional success across real user workflows.

Research initiatives like AppBench and WebGen-Bench represent a significant evolution in how AI web app quality is assessed. Rather than checking whether code compiles or whether a component renders correctly, these frameworks evaluate whether an AI-powered application can successfully guide a user through a complete task, manage state transitions accurately, and recover gracefully from unexpected inputs.

“Evaluation should include both end-user interaction quality and real-world proxy validity.” This principle from recent evaluation research reframes the entire quality assurance conversation for enterprise AI applications.

What does this mean in practice? Your evaluation framework should track AI website growth metrics alongside the following dimensions:

| Metric category | What it measures | Why it matters |
|---|---|---|
| Functional task completion | Did the user achieve their intended goal? | Directly reflects business value delivered |
| State transition accuracy | Does the app maintain correct context across steps? | Prevents costly user errors and drop-offs |
| Usability under failure | How does the app behave when AI outputs are incorrect? | Determines real-world resilience |
| Recovery rate | Percentage of failed interactions successfully recovered | Indicates robustness of fallback design |
| End-user satisfaction | Qualitative feedback on interaction quality | Captures value not visible in technical logs |

To build a rigorous evaluation programme, your team should prioritise:

- Defining task scenarios that mirror actual business workflows, not synthetic test cases

- Recruiting end users from target departments to participate in structured usability assessments

- Measuring functional success rates across diverse user profiles and device contexts

- Tracking UI/UX metrics longitudinally to detect degradation over time

- Incorporating web usability standards to ensure accessibility and interaction quality meet enterprise requirements

Pro Tip: Set a baseline functional success rate before your AI web app goes live. Without this baseline, you cannot objectively determine whether subsequent model updates or prompt changes have improved or degraded the user experience.
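
As a rough illustration of how functional task completion, recovery rate, and a baseline comparison could be computed from session records, consider the sketch below. The `Session` fields and the two-percentage-point tolerance are assumptions made for the example, not values taken from any evaluation framework.

```python
from dataclasses import dataclass
from typing import Optional, Sequence


@dataclass(frozen=True)
class Session:
    task_completed: bool  # did the user reach their intended goal?
    ai_failure: bool      # did at least one AI output fail in this session?
    recovered: bool       # if so, did the fallback path get the user back on track?


def functional_success_rate(sessions: Sequence[Session]) -> float:
    """Share of sessions in which the user completed the task."""
    if not sessions:
        raise ValueError("no sessions to evaluate")
    return sum(s.task_completed for s in sessions) / len(sessions)


def recovery_rate(sessions: Sequence[Session]) -> Optional[float]:
    """Share of failed interactions that were recovered; None if nothing failed."""
    failed = [s for s in sessions if s.ai_failure]
    if not failed:
        return None
    return sum(s.recovered for s in failed) / len(failed)


def compare_to_baseline(current: float, baseline: float, tolerance: float = 0.02) -> str:
    """Classify a change against the pre-launch baseline (tolerance is an assumed value)."""
    delta = current - baseline
    if delta < -tolerance:
        return "degraded"
    if delta > tolerance:
        return "improved"
    return "unchanged"
```

Running the same calculation after every model update or prompt change, against the same task scenarios, turns the baseline into a simple regression check.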

Governance and IT maturity: The real drivers of enterprise AI success

Even with proven performance, AI web applications can fail at scale if governance and organisational readiness are overlooked.

Forrester’s research on enterprise AI readiness makes a definitive statement: AI agent readiness should be treated as an IT capability maturity and governance problem, not a model-performance problem. This insight reorients where enterprise leaders should focus their attention and investment.

Most AI adoption failures do not occur because the model was insufficiently capable. They occur because the organisation lacked the data governance infrastructure to feed the model reliably, the change management processes to align teams around new workflows, or the security frameworks to manage the expanded risk surface that AI introduces.

To assess your organisation’s readiness for AI web application deployment, work through the following checklist systematically:

- Data quality and governance: Are your enterprise data sources clean, structured, and accessible through secure APIs? AI models are only as reliable as the data they process.

- IT capability maturity: Does your team have experience managing probabilistic systems? Traditional IT operations skills do not automatically transfer to AI systems management.

- Security and access controls: Have you extended your security policies to cover AI-specific attack vectors, including prompt injection, data leakage through model outputs, and API abuse?

- Accountability frameworks: Is there a named owner for each AI-driven decision process? Accountability must be assigned before deployment, not after an incident.

- Tech debt assessment: Legacy systems that are not API-ready create integration risk. Identify these before the AI project begins, not during it.

- Change management planning: Have operational teams been prepared for new workflows? User adoption is as critical as technical deployment.
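
Some teams find it useful to encode a checklist like this as a simple pre-deployment gate. The sketch below is one hypothetical way to do so: the `ReadinessItem` type, the area names, and the rule that every area needs both a named owner and a ready status are assumptions for illustration.

```python
from dataclasses import dataclass
from typing import List, Optional, Sequence


@dataclass(frozen=True)
class ReadinessItem:
    area: str              # e.g. "Data quality and governance"
    owner: Optional[str]   # named accountable person, or None if unassigned
    ready: bool            # has the owner signed this area off?


def deployment_blockers(items: Sequence[ReadinessItem]) -> List[str]:
    """Return one message per area that should block deployment."""
    blockers = []
    for item in items:
        if not item.owner:
            blockers.append(f"{item.area}: no named owner")
        elif not item.ready:
            blockers.append(f"{item.area}: not ready (owner: {item.owner})")
    return blockers


checklist = [
    ReadinessItem("Data quality and governance", "A. Data-Owner", True),
    ReadinessItem("Security and access controls", None, False),
    ReadinessItem("Change management planning", "C. Ops-Lead", False),
]

for message in deployment_blockers(checklist):
    print(message)
```

An empty result means every area has an accountable owner who has signed it off; anything else is a reason to pause before deployment.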

Supporting AI growth in business at the enterprise level requires structured decision-making frameworks that treat governance as a first-class deliverable, not an afterthought.

Pro Tip: Run a governance readiness workshop before your AI web application development begins. Identify data owners, security stewards, and business accountability leads early. This step addresses the governance gaps that sit behind many enterprise AI deployment failures.

How enterprises apply AI web applications: Real-world scenarios

Strong governance and mature IT set the foundation. Here is how successful enterprises are putting AI web applications into practice.

The practical applications span virtually every business function, and the most effective implementations share a common trait: they are tightly scoped, deeply integrated with existing business processes, and measured against functional outcomes rather than technical novelty.

Consider these representative scenarios:

- Marketing personalisation: AI web applications analyse customer behaviour in real time and serve dynamic content variations, product recommendations, and personalised messaging without manual segmentation. The impact of AI on digital marketing outcomes is measurable, particularly in conversion rate optimisation.

- Customer service automation: Intelligent web portals that handle tier-one support queries, route complex issues to appropriate agents, and summarise interaction histories reduce average handle time and free skilled staff for higher-value work.

- Operations and procurement: AI-augmented web dashboards that flag anomalies in supply chain data, recommend procurement actions, or automate approval workflows reduce decision latency across operational teams.

- Compliance and risk management: AI applications that review documents, flag regulatory inconsistencies, and maintain audit trails reduce compliance exposure while accelerating review cycles.

Newer evaluation research confirms that functional success rates for AI-generated web apps vary significantly based on task complexity, reinforcing why scoping your pilot carefully is essential to demonstrating early value.

A practical pilot framework should follow these steps: define a single, high-value use case with clear success metrics; assess data availability and quality for that specific use case; design the orchestration and evaluation architecture; deploy to a controlled user group; measure functional success rates and usability outcomes; and only then plan broader rollout. Integrating strong UX design into AI apps from the earliest prototype stage improves adoption rates and reduces rework costs later in the delivery cycle.

Why conventional approaches to AI web apps miss the mark

Here is the uncomfortable truth that most AI strategy conversations avoid. The enterprise technology market has been conditioned to treat AI capability as a function of model quality. Leaders ask which model is most accurate, which vendor has the largest context window, and which benchmark score is highest. These questions are legitimate, but they are secondary to the questions that actually determine whether an AI web application delivers business value.

Forrester’s guidance is unambiguous: enterprise AI readiness is an IT maturity and governance challenge. Organisations that invest heavily in premium AI models while neglecting data governance, orchestration architecture, and cross-functional change management consistently report disappointing outcomes. The model performs adequately. The system fails the business because the surrounding infrastructure was not ready to support it.

The second undervalued lever is user-centric evaluation. Most enterprise AI projects are evaluated by the team that built them, using metrics the team defined, against scenarios the team designed. This approach systematically misses the friction points that real users encounter, the edge cases that matter in production, and the gaps between what the system was designed to do and what users actually need it to do.

What should leaders prioritise instead? First, invest in governance infrastructure before model selection. Second, build cross-functional evaluation teams that include end users, compliance leads, and operations managers alongside technical architects. Third, measure functional success in production continuously, not just at go-live. Fourth, treat AI-enabled digital solutions as an iterative capability, not a one-time project delivery.

The organisations that generate sustained value from AI web applications are not those with access to the most sophisticated models. They are the organisations that have built the governance, evaluation, and architectural discipline to deploy AI responsibly and improve it continuously.

Next steps: Partnering for successful AI web application development

Ready to move beyond strategy and into execution? The gap between knowing what good AI web application architecture looks like and actually building one is where the right development partner makes all the difference. At CloudFusion, we specialise in custom AI web development that integrates robust orchestration layers, governance-ready architectures, and user-centric evaluation from the earliest design phase. Whether your organisation needs a standalone AI-powered web portal or a fully integrated enterprise platform, our team also delivers end-to-end mobile application development to extend your AI capabilities across every channel. Start with clarity on scope, architecture, and business objectives by requesting a web development quote today.

Frequently asked questions

What is an AI web application?

An AI web application combines traditional web functionality with artificial intelligence, such as machine learning or large language models, to deliver smart automation, personalisation, or insights. Critically, production-grade implementations require an orchestration layer between the AI model and core business logic.

How do AI web applications benefit businesses?

They enable organisations to automate complex tasks, personalise customer experiences at scale, and integrate intelligent decision-making into existing web workflows. However, realising these benefits requires treating enterprise AI readiness as a governance and IT maturity challenge, not solely a technical one.

What are the risks of using AI in web applications?

Risks include unreliable AI outputs, security vulnerabilities such as prompt injection, governance gaps, and compliance exposure. Addressing these requires mature IT processes, strict access controls, and accountable oversight structures, as Forrester’s CIO guidance strongly emphasises.

How is success measured for AI web applications?

Success is measured across both technical and business dimensions, including functional task completion rates, usability quality, and state transition accuracy. Emerging evaluation frameworks demonstrate that interactive usability and real-world task success are as important as code-level correctness.

What are the first steps to implement a custom AI web application?

Begin with a governance readiness assessment, define specific business objectives, and establish your evaluation criteria before selecting any technology. Engaging an experienced partner early ensures that enterprise AI readiness considerations are built into the architecture from the start, not retrofitted after deployment.